Installing HPCC Systems with Istio on Microsoft Azure

Our Cloud Native blog series provides information about development plans and the progress made on the HPCC Systems Cloud Native platform. These blogs are also a useful resource for anyone looking for guidance on how to get started, often providing step by step instructions about installation and setup. The full list of resources is available on our Cloud Wiki page, which also includes interviews with HPCC Systems developers talking about the latest progress and features included.

This blog is the result of an ongoing collaboration between the HPCC Systems open source project and a team of developers at Infosys, who have been working with us as early adopters of our new Cloud Native platform. The teams meet biweekly to discuss progress on both sides, which provides valuable insights contributing back into the development process.

Look out for the second blog in this series, coming soon, which provides a tutorial guide to Installing HPCC Systems with Linkerd on Microsoft Azure, written by Nandhini Velu, a Technology Analyst also with Infosys.

Manish Kumar Jaychand (Technology Lead, Infosys) has around 10 years of experience in software development. He has been working with different projects using ECL and HPCC Systems since 2014. The projects he has worked on involved the migration of existing systems to HPCC Systems and also the development of new products in domains such as insurance and publishing. He recently achieved his certification as a Microsoft Azure developer and with his strong ECL background, he has developed a particular interest in exploring the HPCC Systems Cloud Native platform.

As early adopters of the Cloud Native version of HPCC Systems, we have embarked on an exciting collaborative journey. This blog demonstrates how to install Istio with HPCC Systems on Microsoft Azure. With the cloud version of HPCC Systems, the different nodes of traditional architecture can be compared to modern microservices. When the different HPCC Systems components are compared to microservices, the following question springs to mind:

Can we use a service mesh for internode communication within HPCC Systems?

This blog provides the answer to this question. There are various performance considerations to think about as well, but the purpose of this blog is to focus on installing a service mesh (specifically Istio) with HPCC Systems and making it work.

When we talk about a service mesh the first name that comes to mind is Istio. It is a completely open source service mesh that layers transparently onto existing distributed applications. It is also a platform which includes APIs that can integrate into any logging platform, or telemetry or policy system. Istio’s diverse feature set lets you successfully and efficiently run a distributed microservice architecture and provides a uniform way to secure, connect and monitor microservices. Istio provides behavioral insights and operational control over the service mesh, offering a complete solution to satisfy the diverse requirements of microservice applications. Learn more about Istio and Service Mesh.

Creating an Azure Resource Group and AKS Cluster

First, we will create a resource group and AKS cluster on Microsoft Azure before moving ahead and downloading and installing Istio. Below are the steps for creating a resource group and AKS.

If you are new to the HPCC Systems Cloud Native platform and would like a step by step guide to installing HPCC Systems on Microsoft Azure, please read Setting up a default HPCC Systems cluster on Microsoft Azure Cloud Using HPCC Systems and Kubernetes, by Jake Smith, Enterprise/Lead Architect, LexisNexis® Risk Solutions Group.

Note: The following steps are for the Azure Cloud shell.

Creating an Azure Resource Group

To create an Azure resource group, use the following command:

az group create --name rg-hpcc --location westus

Creating an AKS Cluster

To create an AKS Cluster, use the following command:

az aks create --resource-group rg-hpcc --name aks-hpcc --node-vm-size Standard_D3 --node-count 1

Note: The size Standard_D3 is used here, whereas Standard_D2 is used in Jake Smith’s blog mentioned above. Standard_D3 is needed because each pod also runs an Istio sidecar, so extra resources are required to get the pods up.

For kubectl and helm to interact with Azure, the kube client credentials must be configured. To do this run the following command:

az aks get-credentials --resource-group rg-hpcc --name aks-hpcc --admin

You are now ready to interact with Kubernetes in Azure.
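Before moving on, it can help to confirm that kubectl can actually reach the new cluster. The following is a minimal sketch and not part of the original steps. The helper name count_ready_nodes is hypothetical, and it assumes the default column layout of kubectl get nodes, where the second column is the node status.

```shell
# Hypothetical helper: counts nodes whose STATUS column is exactly "Ready".
# Reads the output of `kubectl get nodes --no-headers` on standard input.
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Usage (requires a configured kubectl):
#   kubectl get nodes --no-headers | count_ready_nodes
```

With the single-node cluster created above, the count should reach 1 once the node has finished starting.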

Istio Installation

The first step is to download the latest version of Istio, which may be done using the following command:

curl -L https://istio.io/downloadIstio | sh -

To download a specific version of Istio, this command may be used:

curl -L https://istio.io/downloadIstio | ISTIO_VERSION=1.6.8 TARGET_ARCH=x86_64 sh -

Next, move to the Istio package directory. The directory is named after the version that was downloaded, so adjust the name accordingly. The command below uses istio-1.7.0 as an example:

cd istio-1.7.0

The installation directory contains the following:

- Sample applications in samples/

- The istioctl client binary in the bin/ directory

Add the istioctl client to your path (Linux or macOS) using the following command:

export PATH=$PWD/bin:$PATH
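Because the directory name depends on the version downloaded, it is easy for the download command and the cd command to drift apart. The sketch below pins the version in one place. The helper names istio_dir and setup_istio are hypothetical, and 1.7.0 is only an example default.

```shell
# Pin the version once so the download and the directory name stay in sync.
# 1.7.0 is only an example default; override ISTIO_VERSION as needed.
ISTIO_VERSION="${ISTIO_VERSION:-1.7.0}"

# Hypothetical helper: name of the directory the download script unpacks into.
istio_dir() {
  printf 'istio-%s\n' "$ISTIO_VERSION"
}

# Hypothetical helper: download the pinned version, enter its directory,
# and put istioctl on the PATH for the current shell session.
setup_istio() {
  curl -L https://istio.io/downloadIstio | ISTIO_VERSION="$ISTIO_VERSION" TARGET_ARCH=x86_64 sh - || return 1
  cd "$(istio_dir)" || return 1
  export PATH="$PWD/bin:$PATH"
}
```

Calling setup_istio in the Cloud Shell would then perform the download, the directory change and the PATH update in one step.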

The default and demo configurations of Istio work well for typical microservices, but they are not suitable for HPCC Systems as they stand. A few changes are needed to make it work.

To install Istio, use the yaml file shown below which I saved as install-components.yaml.

    apiVersion: install.istio.io/v1alpha1
    kind: IstioOperator
    spec:
      meshConfig:
        protocolDetectionTimeout: 0s
        localityLbSetting:
          enabled: false
      components:
        egressGateways:
        - enabled: false
          name: istio-egressgateway
      values:
        pilot:
          enableProtocolSniffingForOutbound: false
          enableProtocolSniffingForInbound: false
        telemetry:
          enabled: true
          v2:
            enabled: true
            prometheus:
              enabled: true
            stackdriver:
              enabled: false
        sidecarInjectorWebhook:
          rewriteAppHTTPProbe: true

Upload this file to your cloud storage so that it can be used for installing Istio. The upload can be done easily using the upload/download button on the Azure shell. Then use the following command to install Istio:

istioctl install -f ~/install-components.yaml

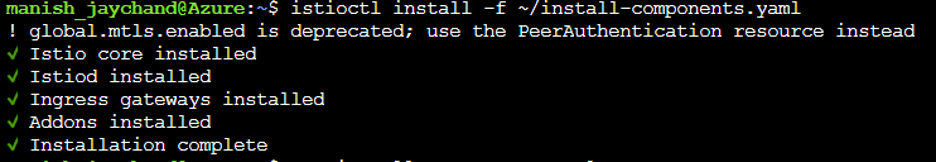

A successful installation of Istio displays the following screen:

Once Istio is successfully installed, the next step is to enable side car injection in the default namespace since we will be installing HPCC Systems in that namespace. To enable automatic side car injection during pod creation, use the following command:

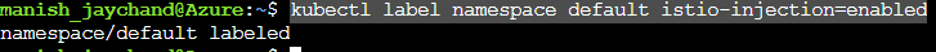

kubectl label namespace default istio-injection=enabled

The following screen is displayed showing that automatic sidecar injection is enabled:
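The label can also be checked from a script rather than by eye. This is a minimal sketch with a hypothetical helper name, injection_enabled. It reads the istio-injection label from the namespace using kubectl's JSONPath output and succeeds only if the value is enabled.

```shell
# Hypothetical helper: succeeds if the namespace carries istio-injection=enabled.
injection_enabled() {
  ns="${1:-default}"
  label=$(kubectl get namespace "$ns" -o jsonpath='{.metadata.labels.istio-injection}')
  [ "$label" = "enabled" ]
}

# Usage (requires a configured kubectl):
#   injection_enabled default && echo "sidecar injection is on"
```

Running this check before installing HPCC Systems matters because the sidecar is only injected when a pod is created, so pods started before the label is applied will not get a proxy.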

After enabling the Istio sidecar injection, we can go ahead and install HPCC Systems.

To install HPCC Systems, add the Helm repo and then install the chart using the following commands:

helm repo add hpcc https://hpcc-systems.github.io/helm-chart/

helm install mycluster hpcc/hpcc --set global.image.version=latest --set storage.dllStorage.storageClass=azurefile --set storage.daliStorage.storageClass=azurefile --set storage.dataStorage.storageClass=azurefile

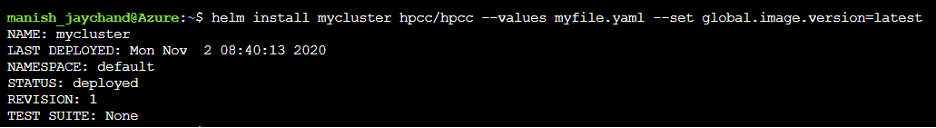

On successful installation, the following screen is displayed:

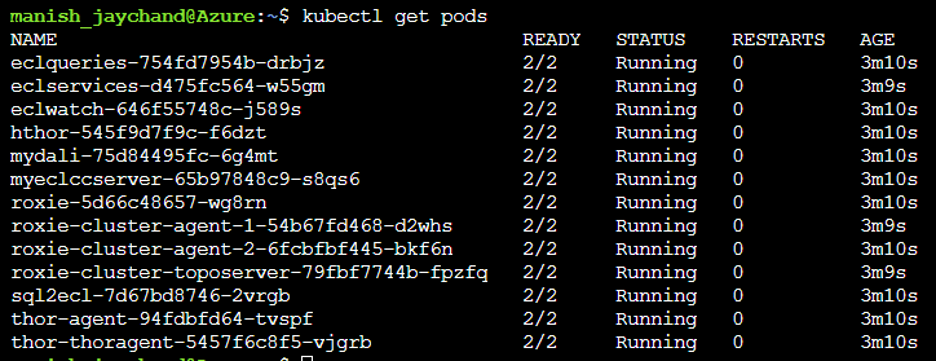

It will take a few minutes for the pods to get initialized and get started. Once this has been done, check the status using following command:

In this example, 2 pods have been started for each HPCC Systems component. One of them is the Istio proxy and the other is the actual HPCC Systems component.
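To see the two pieces by name rather than as a count, the containers in each pod can be listed. The sketch below is an illustration only. The helper names pod_containers and with_sidecar are hypothetical, and it relies on istio-proxy being the name of the container that Istio injects.

```shell
# Hypothetical helper: prints one line per pod: <pod name> <TAB> <container names>.
pod_containers() {
  kubectl get pods -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.containers[*].name}{"\n"}{end}'
}

# Hypothetical helper: keeps only pods that include the istio-proxy sidecar.
with_sidecar() {
  awk -F '\t' '{
    n = split($2, c, " ")
    for (i = 1; i <= n; i++) if (c[i] == "istio-proxy") { print $1; break }
  }'
}

# Usage (requires a configured kubectl):
#   pod_containers | with_sidecar
```

Any HPCC Systems pod missing from the filtered list was probably created before sidecar injection was enabled on the namespace.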

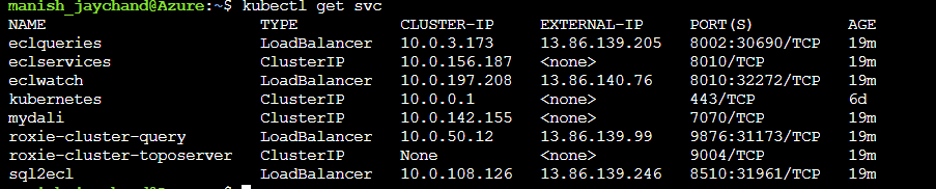

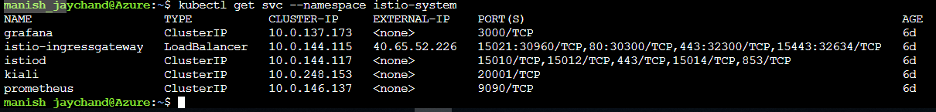

A basic version of HPCC Systems with ISTIO is now installed. The IP for ECL Watch and other components can be identified using the command show below:

Similarly, the IP addresses for the Istio components can be found using the following command:

You should now have a working version of HPCC Systems with Istio.
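For scripting, the external IP of a single service can be read directly instead of being picked out of a table. This is a sketch under assumptions: the helper name service_ip is hypothetical, the service is assumed to be of type LoadBalancer, and the default service name eclwatch is an assumption about what the Helm chart creates, so substitute the name shown in your own service list.

```shell
# Hypothetical helper: prints the external IP of a LoadBalancer service.
# The default service name "eclwatch" is an assumption about the chart.
service_ip() {
  svc="${1:-eclwatch}"
  kubectl get svc "$svc" -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Usage (requires a configured kubectl):
#   service_ip eclwatch
```

The output is empty until Azure has assigned an address to the load balancer, which can take a minute or two after installation.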

The different components of the Istio service mesh can now be accessed, for example Grafana for exploring different metrics and Kiali for observability and dashboards.

However, there is a known problem with sidecar life cycle management in Kubernetes. This issue is not specific to Istio and HPCC Systems. It is a known issue with Kubernetes in general. In terms of the impact for HPCC Systems, it affects the cleanup of processes after a request has been completed.

The new cloud-based HPCC Systems architecture does not have Thor processes running all the time. When a job is run, the Thor agent spins up a Thor manager pod, which in turn launches the Thor worker pods. These pods, which are launched only when needed, are cleaned up once the request has completed.

But when using a service mesh like Istio, the Thor manager and Thor worker (which were spun up as required) are cleaned up as expected, but the Istio proxy, which was created alongside them, does not get cleaned up. The following screenshots illustrate this and help explain why this is a problem.

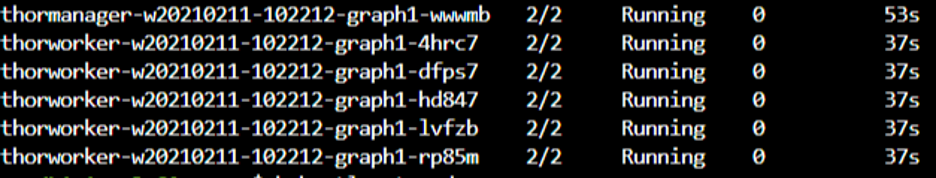

Notice the on demand pods while the job is still in progress. The 2/2 number shown below indicates that both the primary pod and the sidecar proxy are running as expected:

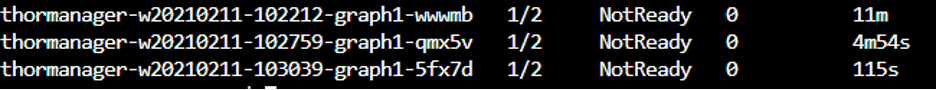

The following screen shot was taken after the job completed. The 1 /2 number shows that the primary pod has been terminated, but the sidecar proxy is still running:

So while we are currently able to run jobs with Istio installed, the pods which are not cleaned up as required will, in the end, consume all available resources. This makes it unfeasible to complete a larger analysis at this point.
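Pods left in this state can be spotted from the READY column. The sketch below is illustrative only: the helper name partially_ready_pods is hypothetical, and it assumes the default kubectl get pods layout, with a header row and READY as the second column.

```shell
# Hypothetical helper: reads `kubectl get pods` output (including the header)
# and prints pods whose READY column shows fewer ready containers than total.
partially_ready_pods() {
  awk 'NR > 1 { split($2, r, "/"); if (r[1] + 0 < r[2] + 0) print $1 }'
}

# Usage (requires a configured kubectl):
#   kubectl get pods | partially_ready_pods
```

Note that pods that are still starting also appear in this list, so it is only a reliable indicator of lingering sidecars once running jobs have had time to finish.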

This known issue in Kubernetes currently has no fix or workaround available. So, while from a functionality perspective, Istio installs correctly and works, it is not possible to fully use Istio with HPCC Systems until a fix or workaround has been provided.

If you would like to know more about this unresolved Kubernetes issue, the links to the Kubernetes GitHub repository below explain the problem in detail:

- Better support for sidecar containers in batch jobs using Kubernetes – Issue #25908

- Better support for sidecar containers in batch jobs using Istio – Issue #6324

- Sidecar Containers – Issue #753

Thank you!

Our thanks go to Manish Kumar Jaychand and the rest of the Infosys team for sharing their knowledge and experience with the HPCC Systems Open Source Community. We also thank them for their ongoing support as we work towards delivering HPCC Systems 8.0.0 (targeting early Q2), which will be the first feature complete version of our Cloud Native platform.