ECL Tips – The Top Ten ECL Compiler/Runtime Errors and How to Correct Them

This ECL Tip spotlights the top ten most common Enterprise Control Language (ECL) compiler/runtime errors, and how to correct them. Developers often encounter these specific errors while learning ECL. Most of these errors are easy to fix, but it is important to understand what the error message is saying and what needs to be corrected.

Bob Foreman, a technical trainer at LexisNexis Risk Solutions, presented “The Top Ten Common ECL Compiler/Runtime Errors” during HPCC Systems Tech Talk 12 as part of his monthly “ECL Tips” segment. Bob is a frequent contributor to our Tech Talks and provides valuable information on programming with Enterprise Control Language (ECL).

In this blog, we will discuss the Top Ten Common ECL Compiler/Runtime Errors, what the errors mean, and how to correct them.

ECL Error Basics

Errors in ECL fall into two categories: compiler errors and runtime errors.

- Compiler errors are related to syntax or improper references to other Definitions.

NOTE: The compiler's error messages are usually descriptive and indicate what needs to be corrected.

- Runtime (or system) errors prevent a submitted workunit (ECL job) from completing. They are often easily corrected.

Let’s take a look at the Top Ten ECL Compiler/Runtime Errors.

Number 10 – The Workunit Assassin

Error: System error: 10056: THOR ABORT

This runtime (system) error occurs when a submitted workunit (ECL job) is aborted. An administrator may abort an ECL job if, for example, it runs too long or prevents other jobs from processing in a timely manner.

To fix this error, find out who aborted your workunit and why, then resubmit it when all is clear. To identify who aborted your workunit, open it in ECL Watch and look at the “Aborted by” field.

Number 9 – Unfriended Node

Error: System error: 4: MP link closed (<ipaddress>:<port>)

This runtime error occurs when the MP (Message Passing) link to a particular node, identified by its IP address and port, is closed because that node has stopped responding. The most common cause is an out-of-memory (OOM) error. The error can also be caused by a network issue, a hardware fault, or a bug in the platform release.

To correct this error, review the slave and system logs, and check for C++ memory leaks. If the problem persists, open an issue in the HPCC Systems Jira issue tracker, which can be accessed at https://hpccsystems.atlassian.net.

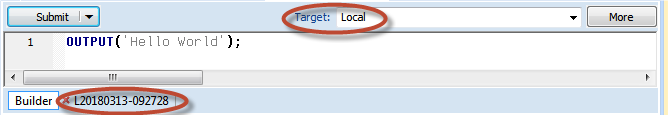

Number 8 – Local Limbo

Error: Compile/Link failed for <path>L<workunitnumber>

This compiler error occurs when you lose the connection to your cluster and the ECL IDE reverts to a local target on your personal computer. The IDE then tries to work with the ECL compiler (eclcc) and other components locally, but the linker cannot be found on the local machine. A common cause of this error is a dropped Wi-Fi connection, which severs the connection to the cluster.


To fix this error, restart your ECL IDE and verify cluster connection.

If the Target in the ECL IDE shows “Local,” try changing it to “THOR,” “Roxie,” or “HTHOR.” If you are unable to change the Target, the connection to the cluster has been lost and you need to reboot. Another way to tell that the ECL IDE has reverted to a local target is to look at the workunit name: an L in front of the workunit name indicates a local target.

Number 7 – Missing Data Pieces (TIE)

Error: Need to supply a value for field <fieldname>

Error: Transform does not supply a value for field “SELF.<fieldname>”

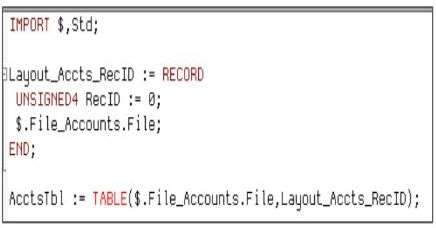

These compiler errors are closely related. The error “Need to supply a value for field <fieldname>” normally occurs in a TABLE function call. It indicates that a field in the TABLE has no value and requires a default value.

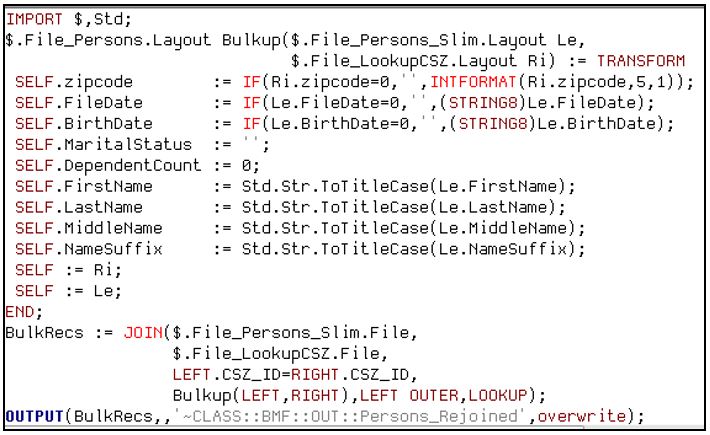

For the error “Transform does not supply a value for field "SELF.<fieldname>",” one or more SELF.<fieldname> assignments are missing from the TRANSFORM: the result record of the TRANSFORM contains a field that is never assigned.

To correct the TABLE error, add a default value for the field in the TABLE. To correct the TRANSFORM error, make sure every field of the result record is assigned in the TRANSFORM, either explicitly or through a SELF := LEFT or SELF := RIGHT assignment.

When creating a TABLE, every field that is not taken from the input dataset must be given a value with the Definition operator (:=), for example UNSIGNED4 RecID := 0. Occasionally, a developer will create a table with fields but no Definition operator, which results in the error “Need to supply a value for field <fieldname>.” The developer may leave out the Definition operator because the field's value is not yet known. In that case, zero for a numeric field, or a blank value for a string, is a valid default that satisfies the TABLE requirements.

In the example code, a JOIN takes its LEFT recordset from “File_Persons_Slim.File” and its RIGHT recordset from “File_LookupCSZ.File,” and its TRANSFORM builds a result record that combines the two record structures. The result record contains fields that are in neither the LEFT nor the RIGHT recordset, such as “Marital Status” and “Dependent Count,” so those fields must be assigned explicitly inside the TRANSFORM. The assignments SELF := LEFT and SELF := RIGHT then cover all of the fields that were not explicitly assigned: any field whose name matches a field in the input is copied automatically from the input of the JOIN to the output of the TRANSFORM.
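The following is a minimal sketch of both fixes. It is not the code from the screenshots: the layouts (PersonRec, CSZRec, OutRec), the TRANSFORM name JoinThem, and the inline data are all hypothetical. RecID gets a placeholder value in the TABLE, and the two fields that exist in neither JOIN input are assigned explicitly before SELF := L and SELF := R fill in the rest by name.

```ecl
// Hypothetical input layouts and inline data (not the original training files).
PersonRec := RECORD
  UNSIGNED4 PersonID;
  STRING20  LastName;
  UNSIGNED4 CSZ_ID;
END;

CSZRec := RECORD
  UNSIGNED4 CSZ_ID;
  STRING25  City;
  STRING2   State;
END;

Persons := DATASET([{1, 'SMITH', 10}, {2, 'JONES', 20}], PersonRec);
Lookup  := DATASET([{10, 'BOCA RATON', 'FL'}, {20, 'ATLANTA', 'GA'}], CSZRec);

// TABLE fix: RecID does not exist in Persons, so it needs a value.
// Declaring "UNSIGNED4 RecID" with no := value raises
// "Need to supply a value for field ...". Zero is a valid placeholder.
SlimTable := TABLE(Persons, {UNSIGNED4 RecID := 0, Persons.LastName, Persons.CSZ_ID});

// Result layout: MaritalStatus and DependentCount exist in neither input.
OutRec := RECORD
  UNSIGNED4 PersonID;
  STRING20  LastName;
  UNSIGNED4 CSZ_ID;
  STRING25  City;
  STRING2   State;
  STRING1   MaritalStatus;
  UNSIGNED1 DependentCount;
END;

OutRec JoinThem(PersonRec L, CSZRec R) := TRANSFORM
  // Fields with no match in either input must be assigned explicitly;
  // otherwise: Transform does not supply a value for field "SELF.<fieldname>".
  SELF.MaritalStatus  := '';
  SELF.DependentCount := 0;
  SELF := L;  // copies PersonID, LastName, CSZ_ID by matching name
  SELF := R;  // copies City and State (CSZ_ID is already assigned)
END;

Joined := JOIN(Persons, Lookup,
               LEFT.CSZ_ID = RIGHT.CSZ_ID,
               JoinThem(LEFT, RIGHT));

OUTPUT(SlimTable);
OUTPUT(Joined);
```

Assigning the unmatched fields first and then using SELF := L / SELF := R keeps the TRANSFORM short. Adding a new field to OutRec that has no source in either input reproduces the TRANSFORM error. That makes the rule easy to check.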

Number 6 – Divide and Conquer

System error: 0: Graph graph1[1], dedup[3]: Global DEDUP, ALL is not supported

For this runtime error, DEDUP was used with the ALL option on an ungrouped dataset. Comparing every record with every other record across the whole cluster is a processor-intensive operation, so the job must be broken down into smaller pieces to run efficiently. Other operations that have a global form, such as ITERATE and SORT, can produce similar errors.

To correct this error, no matter how small the dataset, GROUP the target dataset and then run the DEDUP on the grouped result. DEDUP with ALL can then run, because it operates within each group.

Note: DEDUP with ALL is normally used for smaller recordsets (10,000 records or fewer). For larger datasets, a normal DEDUP, which removes adjacent duplicates from a sorted recordset, is used in most cases.
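Here is a minimal sketch of the GROUP approach, assuming a hypothetical layout and inline data. DEDUP with ALL now compares records only within each group, not across the whole dataset. For that reason, the grouping field (LastName here) should be part of the duplicate key, so that records that duplicate each other always land in the same group.

```ecl
// Hypothetical layout and inline data for illustration.
PersonRec := RECORD
  STRING20 LastName;
  STRING25 City;
END;

Persons := DATASET([{'SMITH', 'BOCA RATON'},
                    {'JONES', 'ATLANTA'},
                    {'SMITH', 'ATLANTA'},
                    {'SMITH', 'BOCA RATON'}], PersonRec);

// An ungrouped DEDUP(Persons, LastName, City, ALL) can fail on Thor with
// "Global DEDUP, ALL is not supported".

// GROUP expects its input sorted by the grouping field.
Grouped := GROUP(SORT(Persons, LastName), LastName);

// DEDUP ... ALL now compares records only within each LastName group,
// so LastName + City together act as the duplicate key.
Deduped := DEDUP(Grouped, City, ALL);

// Remove the grouping before further processing or output.
OUTPUT(UNGROUP(Deduped));
```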

Number 5 – Dataset Hide and Seek

System error: 10001: Graph graph1[1], Missing logical file <filename>

In this runtime error, the filename in the DATASET declaration does not match the name of any file that was sprayed or written with OUTPUT to the cluster.

There are two ways to create a file on the cluster: use ECL Watch and the DFU to spray a file from the landing zone into the cluster, or use an OUTPUT Action to write the file to the cluster.

To correct this error, cross-check the filename specified in the DATASET declaration against the name used in the spray operation or OUTPUT Action, correct any typo, and check for proper use of the tilde (~).

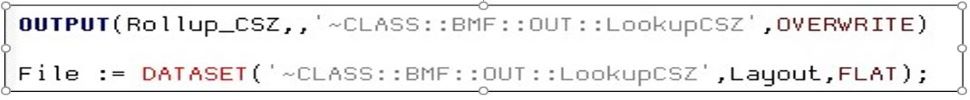

NOTE: Filenames used in DATASET Definitions and OUTPUT Actions can optionally begin with a tilde (~), which makes the name absolute. Without the tilde, the name is relative to the cluster's default scope, “THOR.” These filenames may contain scopes delimited by double colons (::), with the final portion being the filename. A filename cannot end with double colons (::).

The presence of a leading tilde (~) in the filename allows one to override the default scope name for the cluster. In our case, the default scope name for the cluster is “THOR.”

The presence of the leading tilde in the filename only defines the scope name and does not change the set of disks to which the data is written (files are always written to the disks of the cluster on which the code executes).

In the example code, the OUTPUT filename begins with a tilde, which overrides the default scope of the cluster. If the tilde were not used, the filename would be “THOR::CLASS::BMF::OUT::LookupCSZ.”
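This minimal sketch shows how to keep an OUTPUT filename and a DATASET declaration in agreement. The layout and data are hypothetical, and the OUTPUT filename is assumed to be ~CLASS::BMF::OUT::LookupCSZ, which fits the non-tilde name given above. The DATASET must use the same logical filename as the OUTPUT that created the file, with the tilde used or omitted in the same way.

```ecl
// ---- Workunit 1: write the file ----
// Hypothetical layout and inline data.
CSZRec := RECORD
  UNSIGNED4 CSZ_ID;
  STRING25  City;
  STRING2   State;
END;

LookupData := DATASET([{10, 'BOCA RATON', 'FL'}, {20, 'ATLANTA', 'GA'}], CSZRec);

// Leading tilde: an absolute logical filename, so the default
// scope ("THOR" in this example) is not prepended.
OUTPUT(LookupData, , '~CLASS::BMF::OUT::LookupCSZ', OVERWRITE);

// ---- Workunit 2 (a separate submission): read it back ----
// Same layout as in workunit 1 (ideally shared; see Number 3).
CSZRec := RECORD
  UNSIGNED4 CSZ_ID;
  STRING25  City;
  STRING2   State;
END;

// The DATASET names exactly the same file, tilde included.
LookupFile := DATASET('~CLASS::BMF::OUT::LookupCSZ', CSZRec, THOR);
OUTPUT(LookupFile);

// Without the tilde, the default scope is prepended, so this would look for
// THOR::CLASS::BMF::OUT::LookupCSZ and fail with "Missing logical file"
// unless a file by that name also exists:
// BadFile := DATASET('CLASS::BMF::OUT::LookupCSZ', CSZRec, THOR);
```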

Number 4 – No Dataset to Read!

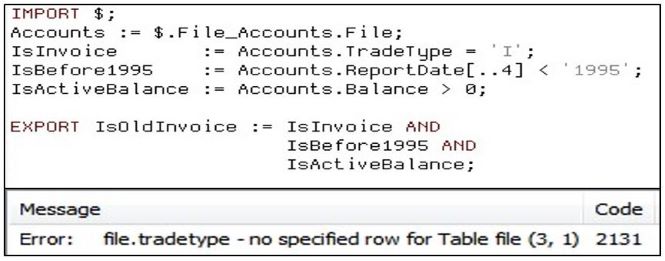

Error: file.<fieldname> – no specified row for Table file

For this compiler error, the code is trying to reference a field value from a single record when the only thing in scope is the entire dataset, or a field may be out of scope in a parent/child denormalized dataset.

To fix this error, modify the Definition so that a single record is in scope when the field is referenced.

In this example code, the user runs a Boolean Definition that tests the “TradeType” and “ReportDate” fields. The error is reported for “TradeType” because it is the first field the compiler encounters. If that reference were corrected, the same error would then appear for “ReportDate.” To correct this error, each of these field references must have a value to test, as shown below. A Boolean Definition cannot be evaluated when it does not know which value to test.
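Here is a minimal sketch of the "no specified row" problem and three ways to put a record in scope. The TradeRec layout, the inline data, and the Boolean Definition name IsRecentRevolving are hypothetical; only the TradeType and ReportDate field names come from the text.

```ecl
// Hypothetical layout and inline data.
TradeRec := RECORD
  STRING1   TradeType;
  UNSIGNED4 ReportDate;   // YYYYMMDD
END;

Trades := DATASET([{'R', 20170115}, {'I', 20160301}], TradeRec);

// A Boolean Definition that tests field values. On its own it has
// no single record in scope; it only names fields of Trades.
IsRecentRevolving := Trades.TradeType = 'R' AND Trades.ReportDate >= 20170101;

// OUTPUT(IsRecentRevolving);  // fails: no specified row for Trades

// Fix 1: use it as a filter, so it is evaluated once per record.
OUTPUT(Trades(IsRecentRevolving));

// Fix 2: ask a question about the whole dataset instead of one row.
OUTPUT(EXISTS(Trades(IsRecentRevolving)));

// Fix 3: put one specific record in scope explicitly.
OUTPUT(Trades[1].TradeType);
```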

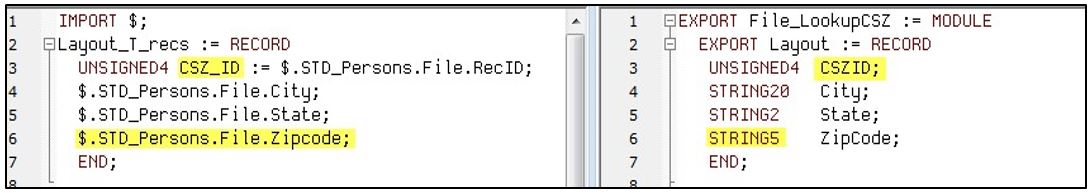

Number 3 – Data Imposters! (TIE)

Error: System error: 0: Dataset layout does not match published layout for file <filename>

Error: System error: 0: Published record size # for file <filename> does not match coded record size #

For these runtime errors, the RECORD structure in the code does not exactly match the RECORD structure metadata that the DFU holds for that dataset.

To correct these errors, make the field names, positions, and value types match.

In the example code below, the LEFT side shows the record structure that was used to OUTPUT the recordset, with the “CSZ_ID” and “Zipcode” fields highlighted. On the RIGHT side, a record structure for the same dataset names the field “CSZID,” which is spelled differently. This results in the error “System error: 0: Dataset layout does not match published layout for file <filename>.” To correct this error, make sure that the field names match. Also on the RIGHT side, the “Zipcode” field is a STRING5 but should be an UNSIGNED3. This results in the error “System error: 0: Published record size # for file <filename> does not match coded record size #.” Changing STRING5 to UNSIGNED3 on the RIGHT side fixes this error.

The best way to avoid these errors is to keep a single source for each RECORD structure and reference it from all ECL code. Do not copy and paste record structures all around the repository. In this example, the user could also have used the layout in the module on the RIGHT as the basis for the OUTPUT.
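One way to keep a single source for a record structure is sketched below, assuming a hypothetical repository folder MyFolder, a hypothetical Definition file Layouts.ecl, and inline data. The three parts belong to three separate files, marked by comments. The writing code and the reading code both reference MyFolder.Layouts.CSZ, so field names, positions, and types cannot drift apart.

```ecl
// ---- Definition file (hypothetical): MyFolder/Layouts.ecl ----
// The single source for the lookup layout.
EXPORT Layouts := MODULE
  EXPORT CSZ := RECORD
    UNSIGNED4 CSZ_ID;
    STRING25  City;
    STRING2   State;
    UNSIGNED3 Zipcode;   // UNSIGNED3 everywhere, never retyped as STRING5
  END;
END;

// ---- BWR file that writes the data ----
IMPORT MyFolder;
CSZData := DATASET([{10, 'BOCA RATON', 'FL', 33487}], MyFolder.Layouts.CSZ);
OUTPUT(CSZData, , '~CLASS::BMF::OUT::LookupCSZ', OVERWRITE);

// ---- Any later code that reads the data ----
IMPORT MyFolder;
LookupFile := DATASET('~CLASS::BMF::OUT::LookupCSZ', MyFolder.Layouts.CSZ, THOR);
OUTPUT(LookupFile);
```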

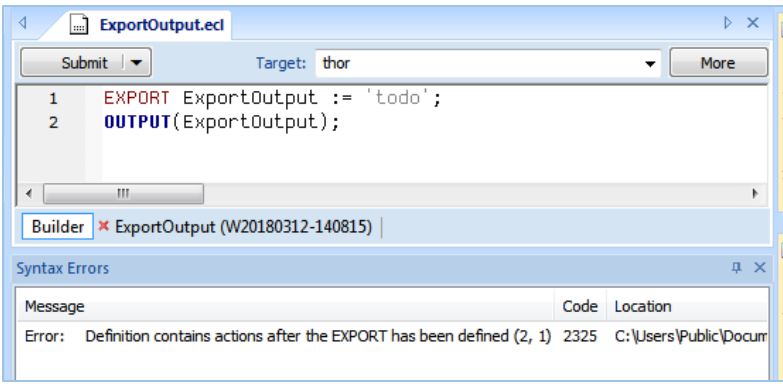

Number 2 – Action Retraction

Error: Definition contains Actions after the EXPORT has been defined

For this compiler error, the ECL code contains an Action (explicit or implicit) following an EXPORT Definition. ECL does not allow exported Definitions and Actions to be mixed in the same file, because of the side effects that could occur. Any EXPORT Definition that is not inside a MODULE structure indicates that the .ecl file is a Definition file and not a BWR (Builder Window Runnable) file.

The fundamental differences between these two file types are:

- A Definition file always contains an EXPORT or SHARED Definition, and cannot contain any Actions.

- A BWR file always contains at least one Action, and cannot contain an EXPORT or SHARED Definition.

So this error is produced by violating the first rule: a Definition file cannot contain Actions.

To correct this error, remove either the Action or the EXPORT from the ECL code.

In this screenshot, the developer is attempting to EXPORT a Definition, and then OUTPUT that Definition in the same ECL file.

To fix this, either comment out the OUTPUT, or comment out the EXPORT keyword and run the OUTPUT on the ExportOutput Definition.

NOTE: If a developer has multiple EXPORTs, the best solution is to use a MODULE structure. The MODULE structure allows the developer to pass parameters to a set of related ECL Definitions. Definitions inside a MODULE may be EXPORT, which makes them visible outside the MODULE structure, or SHARED, which makes them visible only within it.
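The two file types are sketched below under stated assumptions: the folder MyFolder, the file names, and the inline data are hypothetical, and the Definition name ExportOutput is borrowed from the text. The Definition file holds only the EXPORT. The BWR file holds only Actions and reaches the Definition through IMPORT. The last part shows the other fix, where the EXPORT keyword is dropped so the same code runs as a BWR.

```ecl
// ---- Definition file (hypothetical): MyFolder/ExportOutput.ecl ----
// Only an EXPORT Definition, no Actions.
EXPORT ExportOutput := DATASET([{1, 'A'}, {2, 'B'}],
                               {UNSIGNED1 ID, STRING1 Code});
// Adding an Action here, such as OUTPUT(ExportOutput);, would produce
// "Definition contains Actions after the EXPORT has been defined".

// ---- BWR file (hypothetical): MyFolder/BWR_ExportOutput.ecl ----
// Actions (and local Definitions) only, no EXPORT or SHARED.
IMPORT MyFolder;
OUTPUT(MyFolder.ExportOutput);

// ---- Alternative fix: drop the EXPORT keyword ----
// Without EXPORT the Definition is local, so this file is a BWR.
ExportOutputLocal := DATASET([{1, 'A'}, {2, 'B'}],
                             {UNSIGNED1 ID, STRING1 Code});
OUTPUT(ExportOutputLocal);
```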

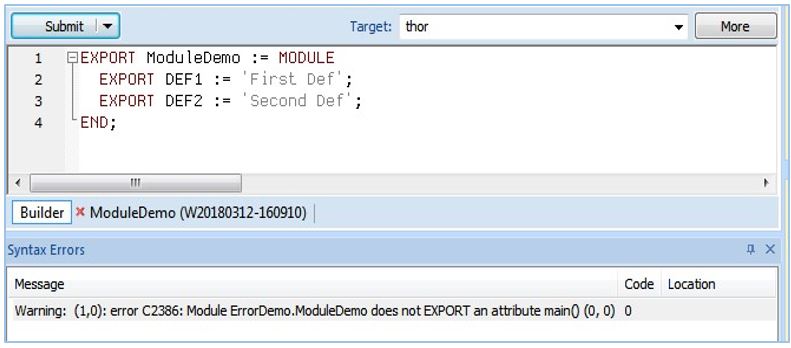

Number 1 – MODULE Mayhem!

Warning: (1,0): error C2386: Module <module name> does not EXPORT an attribute main()

This runtime error occurs when the user has an EXPORT or SHARED MODULE Definition file open and attempts to submit it as a workunit. The error is reported as a warning, and code can normally run with a warning, but in this case the workunit stops at runtime.

Pressing the Submit button on any Definition file is a very bad habit to develop, because (unfortunately) it works for some Definition types, but does NOT work for others.

The fix for this error is to use a Builder window or BWR file to explicitly drill down to the Definition the user needs. Another workaround is to rename one EXPORT Definition to “Main,” but this is not recommended.

In this screenshot, there are two EXPORT Definitions, DEF1 and DEF2, and an error occurs when the workunit is submitted. The fix is to open a BWR or Builder window and drill down to DEF1 by entering “FolderName.ModuleDemo.DEF1,” where FolderName is the repository folder that contains ModuleDemo. The same can be done for DEF2.
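The drill-down fix is sketched below. The folder name MyFolder, the SHARED Definition Base, and the inline data are hypothetical; ModuleDemo, DEF1, and DEF2 follow the names in the text. Submitting the MODULE file directly is what triggers the warning. Submitting the Builder window code names exactly the exported Definitions to run.

```ecl
// ---- Definition file (hypothetical): MyFolder/ModuleDemo.ecl ----
// Pressing Submit on this file is what triggers the C2386 warning.
EXPORT ModuleDemo := MODULE
  // SHARED: usable by the other Definitions inside the MODULE only.
  SHARED Base := DATASET([{1, 'A'}, {2, 'B'}, {3, 'C'}],
                         {UNSIGNED1 ID, STRING1 Code});
  // EXPORT: visible to code outside the MODULE.
  EXPORT DEF1 := Base(ID > 1);
  EXPORT DEF2 := COUNT(Base);
END;

// ---- Builder window or BWR file: drill down to what you need ----
IMPORT MyFolder;
OUTPUT(MyFolder.ModuleDemo.DEF1);
OUTPUT(MyFolder.ModuleDemo.DEF2);
```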

The following are two common warnings that occur in ECL programming.

Honorable Mention – Warning Worries

WARNING: Compiler/Server mismatch:

Compiler: 6.4.2 community_6.4.2-1

Server: community_6.4.8-

This warning occurs when the version of the compiler used by the ECL IDE does not match the version of the server.

To correct this, update the ECL IDE or cluster version as appropriate.

The next warning is seen when using the Open Source ECL IDE.

WARNING: SOAP 1.1 fault: SOAP-ENV:Client[no subcode]

“An HTTP processing error occurred” Detail: [no detail]

This warning occurs when the cluster is not using a shared repository.

NOTE: Before Open Source ECL IDE, all clusters used shared repositories.

This warning can be safely ignored if the user is using a local repository.

Summary

Many compiler errors are common to everyone and can be easily analyzed.

As time goes on, exposure to these common errors will point to quick and easy solutions.

Knowing what to do and where to go when an error message is not understood is critical for productivity.

About Bob Foreman

Since 2011, Bob Foreman has worked with the HPCC Systems technology platform and the ECL programming language, and he has been a technical trainer for over 25 years. He is the developer and designer of the HPCC Systems Online Training Courses, and is the Senior Instructor for all classroom and WebEx/Lync-based training.

If you would like to watch Bob Foreman’s Tech Talk video, “The Top Ten Common ECL Compiler/Runtime Errors, and How to Correct Them,” please use the following link:

The Top Ten ECL Compiler/Runtime Errors.

Acknowledgments

A special thank you goes to Bob Foreman, Richard Taylor, and Hugo Watanuki for their guidance and valuable contributions to this blog post.