Introducing the HPCC Systems 5.4.x release series

There is a lot to say about this intermediate release.

As well as the many improvements and optimizations that make your use of the system easier and more efficient, HPCC Systems® now supports Ubuntu 15.04 and CentOS 7.

Nagios monitoring and ECL Watch

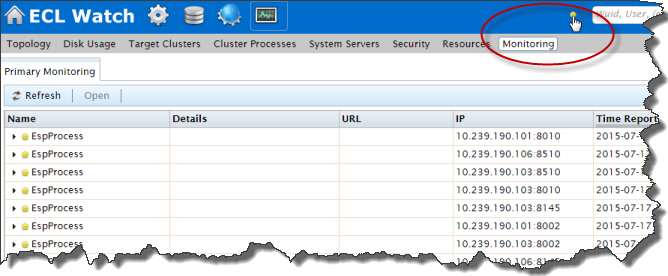

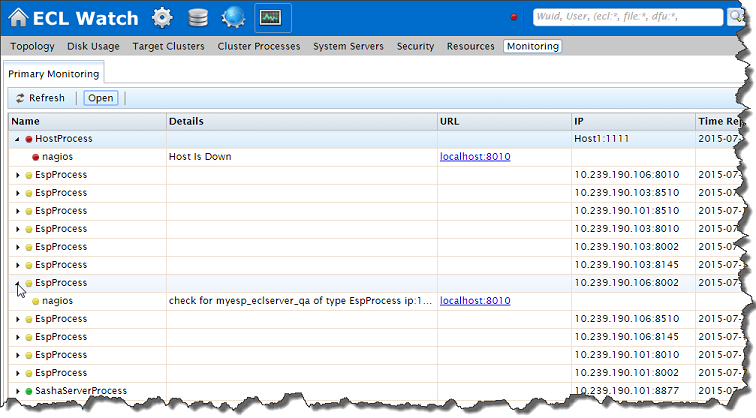

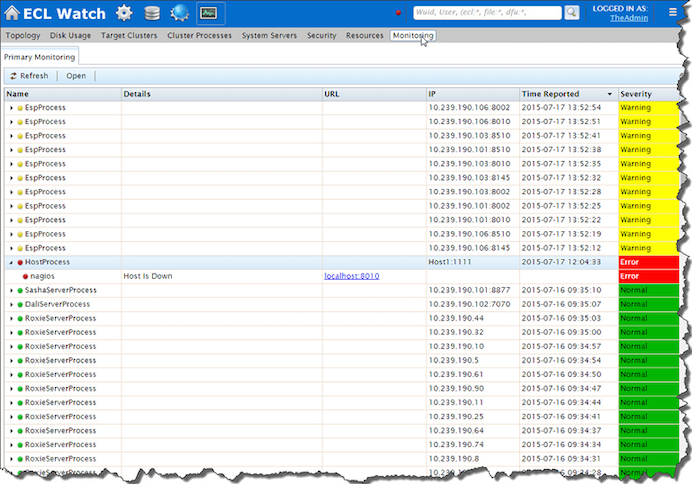

In HPCC Systems® 5.2.0, we provided command line features to generate Nagios configuration directly from the HPCC Systems® configuration file. In 5.4.0, once you have Nagios configured for your environment, you can view any associated messages immediately in ECL Watch on the Monitoring page located in the Operations area. An indicator light on the top banner changes color according to the status of your system:

Gray – No system data reported (or not configured for monitoring)

Yellow – Warnings are reported

Red – Alerts are reported

Green – System running normally

As shown here:

This brings our Nagios support in line with our Ganglia monitoring support. Operators and administrators now have easy access to a whole suite of alerting and monitoring information for their HPCC Systems® environments, all of which is completely configurable to suit their needs.

You can get the HPCC Systems® Monitoring and Reporting installation packages for Nagios and Ganglia on the HPCC Systems® website here and the supporting documentation can be found here.

Init system improvements

A number of important additions and enhancements have been made in this area. The main theme of all these changes is to simplify the init system and make the scripts and binaries more self-contained. We’ve also standardized the logging across all init scripts and cleaned up areas where there were multiple files for processes or more calls to functions than were really necessary. You can find details of all improvements in this area in the changelog for 5.4.0 but these deserve a special mention:

- We’ve added Python to the Debian package requirements, so we can now use the Python socket library in some of the code that helps resolve hostnames to IPs. Python 2 is a guaranteed resource on CentOS because it is used by the package manager (yum). However, it wasn’t a guaranteed resource on Ubuntu machines. This is a great addition for those of you who use Python, and it’s also good news for introducing more flexibility into the HPCC Systems® init system, since we can now move utility programs such as hpcc-push, install-cluster, hpcc-run, etc. over to Python.

- We have added an optional flag (-p) into install-cluster.sh to enable you to copy existing keys should this be required.

- We have consolidated some often repeated commands into helper functions in hpcc_common, which are used in the configmgr script and elsewhere.

- There is now a single format for pid file names so it’s easier to glance at the /var/run/HPCCSystems/ directory and see which components are up and running.

- On starting a component, we now check whether it is still stopping and, if so, the new command is aborted. We added this check because, without it, the resulting collisions sometimes caused the new instance to fail to start. We have also added a force kill option to help in situations where a component is failing to stop.

- Modifications have been made to DFU Server and its init script so that the dfuserver STOP=1 call is no longer required for shutdown. DFU Server now shuts down using the same mechanism as all other HPCC Systems® components.

- The init thor code has been completely rewritten. This is a great change that simplifies the whole of the init system in this area. Instead of having six scripts touching the same files asynchronously, which sometimes caused data race issues, we now have just two scripts: init_thor and init_thorslave. These scripts are self-contained and include all the logic from the previous versions.

- Frunssh in the run function for the thor init script has been improved so that if it fails, the failure is detected and reported immediately.
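The hostname-to-IP resolution mentioned in the first item of this list can be illustrated with the Python socket library. This is only a minimal sketch of the general technique, not the code shipped in the HPCC Systems® init scripts; the function name `resolve_host` is hypothetical.

```python
import socket


def resolve_host(hostname):
    """Return the sorted, de-duplicated IPv4 addresses for a hostname.

    Hypothetical helper for illustration; not part of the HPCC Systems scripts.
    """
    # getaddrinfo returns one tuple per matching address; the address string
    # is the first element of the sockaddr tuple at index 4.
    infos = socket.getaddrinfo(hostname, None, socket.AF_INET, socket.SOCK_STREAM)
    return sorted({info[4][0] for info in infos})


if __name__ == "__main__":
    # An IPv4 literal resolves to itself without consulting DNS.
    print(resolve_host("127.0.0.1"))
```

Returning every address rather than just the first keeps the helper usable for hosts with more than one IPv4 address.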

Performance improvements in the Memory Manager and Code Generator

We are always looking for ways to make our platform run faster, but we also like to plan for the future, making sure that any changes we make take account of new technology and more efficient ways of using the technology already available. With this in mind, the improvements we have made to the Memory Manager will make it possible for us to implement the ability to run all thorslaves in a single process in a future release. These changes also have the happy side effect of making the platform run faster in HPCC Systems® 5.4.0!

Improvements in the code generator focus on two areas: improving the generated code and generating that code much faster. For example, the code generated for heavily nested IFs has been improved (see https://hpccsystems.atlassian.net/browse/HPCC-12934 ), as has the code generated for dictionary lookups. We have also extended a number of specific use cases to make them more flexible:

- Scoring queries containing lots of datasets which only contain data (such as lookup tables) have been improved.

- We now allow fields that are datasets to be used in the same way as other fields, for example when deduping. This means that you don’t have to worry about the type of a field; you can simply name it.

- We now support more SQL data types, including time and date.

- This release includes work started to allow embedded languages to behave like disk files. Whereas embedded code used to be distributed to the first node only, you can now read records onto all nodes in parallel, which means, for example, that you can now access large SQL databases faster. While this is only available for read at the moment, we will be extending it to include write in a future release.

- We have added a new option, #option('obfuscateOutput'). This security/privacy option allows you to publish a query to a third party while hiding the details of the ECL implementation, should you require it to remain private.

Improvements to the Cassandra plugin

Performance and stability have been the focus of the improvements we have made to the Cassandra plugin in HPCC Systems® 5.4.0.

It is now possible to pass in an option to indicate that results should be returned page by page. This allows fetching of the later pages to be overlapped with processing of the data retrieved in earlier pages, and reduces the memory that would be needed if large result sets were returned all at once. It may also mean that later values do not need to be retrieved at all if, for example, the ECL code does not pull all the rows because of a FIRSTN activity or because the ECL code fails on another branch of the execution graph.
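The effect of paged retrieval can be sketched outside the plugin. The example below is an assumption-laden illustration in Python, not the plugin's implementation: `FakePagedSource` and `iter_rows` are hypothetical names, the data is an in-memory list rather than a Cassandra table, and the sketch models only lazy fetching, not the overlap of fetching with processing.

```python
from itertools import islice


class FakePagedSource:
    """Hypothetical stand-in for a paged result set; counts page requests."""

    def __init__(self, rows, page_size):
        self.rows = rows
        self.page_size = page_size
        self.pages_fetched = 0

    def fetch_page(self, page_index):
        # Return one page of rows, or an empty list past the end of the data.
        start = page_index * self.page_size
        self.pages_fetched += 1
        return self.rows[start:start + self.page_size]


def iter_rows(source):
    """Yield rows one at a time, requesting the next page only when needed."""
    page_index = 0
    while True:
        page = source.fetch_page(page_index)
        if not page:
            return
        for row in page:
            yield row
        page_index += 1


# A consumer that stops early (much like a FIRSTN activity) never causes
# the remaining pages to be requested.
source = FakePagedSource(rows=list(range(1000)), page_size=100)
first_150 = list(islice(iter_rows(source), 150))
print(len(first_150), "rows read using", source.pages_fetched, "page requests")
```

Only one page is held in memory at a time, which is where the memory saving over returning the whole result set comes from.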

We have also made improvements to the driver which now caches Cassandra sessions and prepared queries that use the same cluster options. This significantly reduces the overhead when making multiple calls to Cassandra within a query, or indeed from multiple queries on the same Roxie node.
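As a rough illustration of why this caching helps, the following Python sketch keys cached sessions on the cluster options and cached prepared queries on the options plus the query text. It is not the driver's code: `SessionCache`, `FakeSession` and `fake_connect` are hypothetical, the option names are made up for the example, and the sketch ignores the locking a multi-threaded Roxie node would need.

```python
class SessionCache:
    """Hypothetical cache of sessions and prepared queries (single-threaded)."""

    def __init__(self, connect):
        self._connect = connect   # callable that opens a new session
        self._sessions = {}       # options key -> session
        self._prepared = {}       # (options key, query text) -> prepared query

    @staticmethod
    def _key(options):
        # Sort the items so the same options in a different order share a key.
        return tuple(sorted(options.items()))

    def session(self, options):
        key = self._key(options)
        if key not in self._sessions:
            self._sessions[key] = self._connect(options)
        return self._sessions[key]

    def prepared(self, options, query):
        key = (self._key(options), query)
        if key not in self._prepared:
            self._prepared[key] = self.session(options).prepare(query)
        return self._prepared[key]


class FakeSession:
    """Stand-in for a database session; counts prepare() calls."""

    def __init__(self, options):
        self.options = options
        self.prepare_calls = 0

    def prepare(self, query):
        self.prepare_calls += 1
        return ("prepared", query)


connects = []


def fake_connect(options):
    session = FakeSession(options)
    connects.append(session)
    return session


cache = SessionCache(fake_connect)
opts_a = {"server": "10.0.0.1", "keyspace": "test"}
opts_b = {"keyspace": "test", "server": "10.0.0.1"}  # same options, other order
s1 = cache.session(opts_a)
s2 = cache.session(opts_b)
p1 = cache.prepared(opts_a, "SELECT * FROM t WHERE id = ?")
p2 = cache.prepared(opts_b, "SELECT * FROM t WHERE id = ?")
print(len(connects), "connection opened;", s1.prepare_calls, "query prepared")
```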

The behaviour of the Cassandra driver, when bulk-adding data from an ECL dataset to a Cassandra table, has also been improved. You can now send multiple queries to Cassandra in parallel rather than waiting for each one to return before sending the next, or requiring a batch to be used. You can still use a Cassandra batch (by specifying batchmode) where it is appropriate to do so, for example, where you know that all the records being added will go to the same Cassandra partition, or where it is important that they are all added together. But please note that there are limits (at the Cassandra server end) to how large such batches may be.
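The difference between waiting for each insert and keeping several in flight can be sketched as follows. This is an illustration under stated assumptions rather than the driver's mechanism: it uses a Python thread pool as a stand-in for parallel requests, and `insert_all`, `fake_insert` and `max_in_flight` are hypothetical names, with a dictionary playing the part of the Cassandra table.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


def insert_all(rows, insert_one, max_in_flight=4):
    """Send one insert per row, with up to max_in_flight running at once."""
    with ThreadPoolExecutor(max_workers=max_in_flight) as pool:
        # list() waits for every insert and re-raises the first failure, if any.
        return list(pool.map(insert_one, rows))


# In-memory stand-in for a table, guarded by a lock because the
# inserts run on several threads.
table = {}
table_lock = threading.Lock()


def fake_insert(row):
    key, value = row
    with table_lock:
        table[key] = value
    return key


rows = [(i, "value-%d" % i) for i in range(20)]
inserted = insert_all(rows, fake_insert)
print(len(inserted), "rows inserted")
```

Bounding the number of requests in flight keeps the sender from overwhelming the server while still avoiding a full round trip of waiting per row.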

ECL language features – JSON and web services

Building on the new ECL language features we added in HPCC Systems® 5.2.0, we have extended our support for JSON, adding two features in HPCC Systems® 5.4.0:

- dfuplus now supports the spraying of JSON files. This capability was previously only supported via ECL Watch, but is now also available via the command line and includes the ability to spray many small files into one logical file.

- The common JSON format ‘JSON Lines’ is now also supported.
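For readers unfamiliar with the JSON Lines format, each line of the file is a complete JSON value, usually one record per line. The short Python sketch below illustrates the format only; it says nothing about how dfuplus or ECL reads such files, and `parse_json_lines` and the sample records are made up for the example.

```python
import json


def parse_json_lines(text):
    """Parse JSON Lines text: one JSON value per non-blank line."""
    records = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue  # tolerate blank lines between records
        records.append(json.loads(line))
    return records


sample = '{"id": 1, "name": "alpha"}\n{"id": 2, "name": "beta"}\n'
records = parse_json_lines(sample)
print(records)
```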

We have also improved these ECL Language features to make them more intuitive and more robust:

- The ECL command line, which is used for running, deploying and publishing ECL, is now more intuitive. For example, where in the past you would have used the command “ecl run myfile.ecl --target=mythor”, you can now achieve the same result using “ecl run mythor myfile.ecl”.

- It is now easier to clean up unused files after a Roxie query has been deleted, using the new command line option “--delete-recursive” for the “ecl roxie unused-files” command.

- The ECL function STORED in HPCC Systems® 5.2 now includes a PASSWORD option which is designed to help you safely store passwords as parameters to your workunits.

- HTTPCALL and SOAPCALL have been enhanced to allow you to set one or more arbitrary HTTP headers using the HTTPHEADER keyword.

- The Cluster Type has been added to the response of the ESP API call WsTopology.TpLogicalClusterQuery. Using this call, you can find the type of every target cluster in an HPCC Systems® environment (hthor, roxie, etc.). You can now set up applications to choose actions based on the cluster type. For example, you may want ECL IDE to do some ECL syntax checks only for hthor.

A new version of WsSQL has also been released alongside 5.4.0. More to come on this in a separate blog.

Notes:

- The downloads page for this and other HPCC Systems® releases is available here.

- More information on the use of the EMBED feature for Cassandra and other embedded languages can be found here: http://hpccsystems.com/bb/viewtopic.php?f=41&t=1509&sid=da0beb2309e68ae614cc1ac1074193fd

- More information including changelog and downloads for the most recent WsSQL release is available here.

- The RedBook items for HPCC Systems 5.4.x are located here: 5.4.x Red Book