Update – Integrating Prior Knowledge with Biomedical Natural Language Processing

Jingqing Zhang is a Ph.D. (HiPEDS) candidate at the Department of Computing, Imperial College London, London, UK, under the supervision of Professor Yi-Ke Guo. His research interests include Natural Language Processing, Text Mining, Data Mining, and Deep Learning. Jingqing received his MRes degree in Computing from Imperial College with Distinction in 2017 and BEng in Computer Science and Technology from Tsinghua University in 2016. He has been an essential part of the HPCC Systems Academic program throughout his masters and doctoral studies.

In this blog, we discuss Jingqing’s latest research in biomedical natural language processing. This research specifically focuses on using prior knowledge to improve the capability of deep learning models to handle free text in a biomedical domain. We also touch on Jingqing’s prior research in traffic prediction and text classification.

Natural Language Processing

In the last decade, natural language processing has been studied extensively through the application of deep learning. Several fundamental deep neural networks, such as Recurrent Neural Networks (RNN), Long Short-Term Memory networks (LSTM), and Transformers, have been designed to model human languages. These networks rely on statistical and probabilistic techniques and are sequential and context-aware. In addition to linguistic patterns, understanding and using natural language also relies on common sense. This is called prior knowledge.

Prior Knowledge

For this project, prior knowledge was categorized into two types:

- Structured knowledge

This can be explicitly defined by structured data, such as knowledge graphs and clinical ontologies. It typically defines the relations between entities clearly. For example, London is the capital city of the United Kingdom, or lung cancer is a sub-class of neoplasm. Structured knowledge has been proven to be useful in tasks that require reasoning and understanding.

- Unstructured knowledge

This can be implicitly contained in large text corpora, such as news articles, blogs, books, Wikipedia, and more. In recent years, pre-training techniques have shown great success in using unstructured knowledge to boost the accuracy of deep learning models in a variety of NLP tasks.

Prior knowledge can be useful in domains that have many expert-curated knowledge bases and a large amount of free text. The biomedical domain is one such domain.
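To make the idea of structured knowledge more concrete, the sub-class relation mentioned above (lung cancer is a sub-class of neoplasm) can be sketched as a tiny "is-a" hierarchy. This is only an illustrative sketch: the `IS_A` dictionary, its entries (including the "disease" parent), and the helper names `ancestors` and `is_subclass_of` are assumptions made for this example and are not taken from HPO or any other real ontology.

```python
from typing import Dict, List, Set

# Toy "is-a" hierarchy. The entries are illustrative only and are
# not copied from HPO or any other real knowledge base.
IS_A: Dict[str, List[str]] = {
    "lung cancer": ["neoplasm"],
    "neoplasm": ["disease"],
    "disease": [],
}


def ancestors(term: str, is_a: Dict[str, List[str]]) -> Set[str]:
    """Return every term reachable from `term` by following is-a edges."""
    seen: Set[str] = set()
    stack = list(is_a.get(term, []))
    while stack:
        parent = stack.pop()
        if parent not in seen:
            seen.add(parent)
            stack.extend(is_a.get(parent, []))
    return seen


def is_subclass_of(term: str, candidate: str, is_a: Dict[str, List[str]]) -> bool:
    """True if `candidate` is a direct or indirect parent of `term`."""
    return candidate in ancestors(term, is_a)


if __name__ == "__main__":
    print(is_subclass_of("lung cancer", "neoplasm", IS_A))
    print(sorted(ancestors("lung cancer", IS_A)))
```

A model that has access to such relations can, for example, treat a mention of a specific term as evidence for its more general parents, which is one reason structured knowledge helps in tasks that require reasoning.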

Two recent works provide case studies using the two types of prior knowledge in biomedical natural language processing:

Project 1: Self-supervised phenotype annotation on Electronic Health Records with semantic representation and human phenotype ontology.

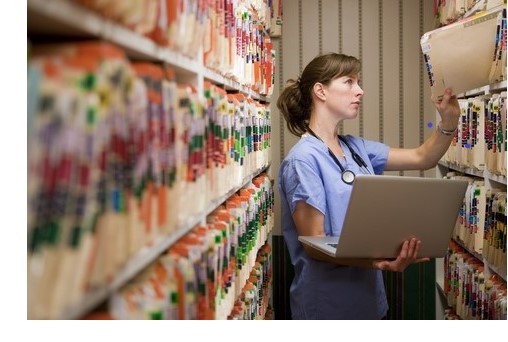

Electronic health records (EHRs) are the digital version of patients’ paper charts. These records are real-time and patient-centered. Heterogeneous data elements contained in EHRs include medical history, symptoms, diagnoses, medication, treatment plans, laboratory test results, and radiology imaging. With the increasing adoption of EHRs in hospitals, the valuable information archived in EHRs has been found to be useful in clinical informatics applications. This research focused on extracting phenotype information from EHR textual datasets for better disease understanding. In medical terms, the word “phenotype” refers to deviations from normal morphology, physiology, or behavior. The EHRs serve as a rich and valuable source of phenotype information, as they naturally describe phenotypes of patients in narratives.

Several standardized knowledge bases were proposed to help clinicians understand phenotype information in EHRs formally, systematically, and consistently. Human Phenotype Ontology (HPO), a standardized and widely used knowledge base of phenotypes, provides over 15,000 terms. Given that annotating phenotypes from millions of EHRs manually is extremely expensive and impractical, automatic annotation techniques based on natural language processing (NLP) are needed.

For this work, a novel self-supervised deep learning model to annotate phenotypic abnormalities from EHRs was proposed. Without using any labeled data, the framework was designed to integrate expert-curated phenotype knowledge in HPO. It was assumed that the semantics of EHRs is a composition of the semantics of phenotypes. Based on this assumption, a Transformer-based encoder was constructed to learn and constrain the semantic representation of EHRs and phenotypes. The goal was to learn which phenotypes are mentioned and semantically more important in EHR narratives.

The experiments were conducted on three datasets (MIMIC-III, COVID-19 reports, and N2C2), and the proposed model was compared with baseline phenotyping models, including NCR, Clinphen, NCBO, cTAKES, MetaMap, MetaMapLite, and MedCAT. The proposed deep learning model achieved state-of-the-art accuracy against all the baseline models on the three datasets. On the MIMIC-III dataset, the proposed model achieved at least a 20% boost in accuracy.

Part of this work was published at BIBM 2019.

Project 2: PEGASUS: pre-training with extracted gap-sentences for abstractive summarization.

Text summarization aims at generating accurate and concise summaries from input document(s). In contrast to extractive summarization, which merely copies informative fragments from the input, abstractive summarization may generate novel words. A good abstractive summary covers principal information in the input and is linguistically fluent.

Recent work on pre-training Transformers with self-supervised objectives on large text corpora has shown great success when the models are fine-tuned on downstream NLP tasks, including text summarization. However, pre-training objectives tailored for abstractive text summarization have not been explored. In this work, pre-training objectives designed specifically for abstractive text summarization were studied, and the resulting models were evaluated on 12 downstream datasets spanning news, science (including biomedical literature from PubMed), short stories, instructions, emails, legislative bills, and patents.

Gap sentences generation (GSG), tailored for text summarization, was proposed as a pre-training objective. It was hypothesized that using a pre-training objective that more closely resembles the downstream task leads to better and faster fine-tuning performance. Given the intended use for abstractive summarization, GSG involves generating summary-like text from an input document. To leverage massive text corpora for pre-training, whole sentences were selected and masked from documents, and gap-sentences were concatenated into a pseudo-summary.
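The construction of a gap-sentence training example described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the PEGASUS implementation: the mask token string `<mask_1>`, the regex-based sentence splitter, the default `gap_ratio`, and the choice to pick sentences by unigram overlap with the rest of the document are all assumptions of this example, and the function names are hypothetical.

```python
import re
from collections import Counter
from typing import List, Tuple

MASK_TOKEN = "<mask_1>"  # hypothetical placeholder for a masked sentence


def split_sentences(text: str) -> List[str]:
    """Naive sentence splitter: break after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p.strip() for p in parts if p.strip()]


def tokens(sentence: str) -> List[str]:
    """Lowercased alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", sentence.lower())


def unigram_f1(candidate: List[str], reference: List[str]) -> float:
    """F1 of the unigram overlap between two token lists."""
    overlap = sum((Counter(candidate) & Counter(reference)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(candidate)
    recall = overlap / len(reference)
    return 2 * precision * recall / (precision + recall)


def gap_sentence_example(document: str, gap_ratio: float = 0.3) -> Tuple[str, str]:
    """Mask whole sentences and return (masked_input, pseudo_summary)."""
    sentences = split_sentences(document)
    n_gaps = max(1, round(gap_ratio * len(sentences)))
    # Score each sentence against the rest of the document (assumed heuristic).
    scores = []
    for i, sent in enumerate(sentences):
        rest = [t for j, s in enumerate(sentences) if j != i for t in tokens(s)]
        scores.append(unigram_f1(tokens(sent), rest))
    ranked = sorted(range(len(sentences)), key=lambda i: scores[i], reverse=True)
    chosen = sorted(ranked[:n_gaps])  # keep the original sentence order
    masked_input = " ".join(
        MASK_TOKEN if i in chosen else s for i, s in enumerate(sentences)
    )
    pseudo_summary = " ".join(sentences[i] for i in chosen)
    return masked_input, pseudo_summary


if __name__ == "__main__":
    doc = (
        "The cat sat on the mat. The dog chased the cat. "
        "Birds sing. The cat and the dog sat on the mat."
    )
    masked, summary = gap_sentence_example(doc)
    print(masked)
    print(summary)
```

During pre-training, a sequence-to-sequence model would receive the masked input and be trained to generate the pseudo-summary, which is what makes the objective resemble the downstream summarization task.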

The effects of the pre-training corpora, gap-sentence ratios, and vocabulary sizes were demonstrated, and the best configuration was scaled up to achieve state-of-the-art results on all 12 diverse downstream datasets considered. On biomedical literature data from PubMed, the proposed model achieved a ROUGE1-F1 score of 45.97, which is better than the previous state-of-the-art score of 40.59.
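For readers unfamiliar with the metric, ROUGE1-F1 measures the unigram overlap between a generated summary and a reference summary, combining precision and recall into a single F1 value. The sketch below is a simplified illustration that assumes whitespace tokenization and lowercasing only; standard ROUGE tooling applies its own tokenization and optional stemming, so it will not reproduce published scores exactly. The function name `rouge1_f1` and the example sentences are made up for this illustration.

```python
from collections import Counter


def rouge1_f1(candidate: str, reference: str) -> float:
    """Simplified ROUGE-1 F1: unigram overlap with whitespace tokenization."""
    cand = candidate.lower().split()
    ref = reference.lower().split()
    # Clipped overlap: each word counts at most as often as it appears in both.
    overlap = sum((Counter(cand) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(cand)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    generated = "the model summarizes biomedical papers"
    reference = "the model summarizes long biomedical papers well"
    # 5 of 5 candidate words match (precision 1.0), 5 of 7 reference words
    # are covered (recall 5/7), so F1 = 10/12.
    print(round(100 * rouge1_f1(generated, reference), 2))  # prints 83.33
```

Scores such as 45.97 and 40.59 are conventionally reported on this 0 to 100 scale, averaged over a test set.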

The work was published at ICML 2020.

Work with HPCC Systems

For his projects, HPCC Systems was integrated with TensorLayer. TensorLayer is a deep learning framework based on TensorFlow that provides a moderate level of API abstraction, enabling researchers and engineers to develop customized deep learning models easily and quickly.

In 2017, TensorLayer was awarded Best Open Source Software by the ACM Multimedia Society. TensorLayer was tested with ECL and Python embeds in HPCC Systems and showed comparable performance and good compatibility. In addition, HPCC Systems with a Python embed was used to pre-process and clean the MIMIC-III clinical database, which is used as input for the deep learning models.

Previous Projects

Prior to his research in biomedical natural language processing, Jingqing worked on a project that explored the application of deep content learning in traffic data. In the two studies published at KDD 2018 and ACM Multimedia 2018, the project team demonstrated that sequence learning with external auxiliary information, such as the number of search queries from a mobile map app and the traffic network, can improve the accuracy of traffic speed prediction, given historical traffic data. This solution could provide useful insight for traffic management in a metropolitan area and suggests a use case for big data in future smart city traffic planning.

More information about these projects is available using the following resources:

- Article on “Deep Sequence Learning with Auxiliary Information for Traffic Prediction” (KDD 2018)

- Link to the Q-Traffic dataset

- TensorLayer webpage

- Deep Sequence Learning in Traffic Prediction and Text Classification – video clip from HPCC Systems Tech Talk 15 webcast

Acknowledgments

Jingqing Zhang’s Masters and Ph.D. research was sponsored by the HPCC Systems Academic Program. He was supported in the use of our big data platform by our LexisNexis Risk Solutions Group colleagues, Roger Dev, Jacob Cobbett-Smith, and Mark Kelly.

We would also like to acknowledge Professor Yi-Ke Guo, Founding Director of the Data Science Institute at Imperial College London, who is Jingqing’s supervisor.

Jingqing’s contributions to the HPCC Systems open source project have been invaluable. Those who have worked with Jingqing over the past four years have great respect for this young man and his phenomenal work. Lorraine Chapman, Manager of the HPCC Systems Intern Program and representative of our Academic Program, had this to say about our collaboration with Jingqing:

“It has been extremely interesting and rewarding to work with Jingqing Zhang as part of our Academic Program outreach. He has been using HPCC Systems to ingest and process data and was one of the first to experiment using HPCC Systems with TensorFlow. Every time one project was nearing completion, he always had new ideas lined up for the next phase pushing against the boundaries of what may be possible. His contributions to our open source project include blogs for our website, published papers, conference presentations and he has been a judge for our poster contest. I wish Jingqing every success. I have no doubt with his knowledge, drive, and talent, a bright future lies ahead.”