Using HPCC Systems Machine Learning

In my previous blog Machine Learning Demystified, I reviewed the basic concepts and terminology of Machine Learning.

This article introduces the HPCC Systems Machine Learning Bundles and provides a tutorial on how to apply these capabilities to your data.

It assumes that you are familiar with Machine Learning concepts (if not, or for a refresher, read my previous blog ) and have at least some basic ECL programming skills, which you can get by reading our ECL documentation or taking an online training course.

We begin with a brief overview of the bundles and then provide a tutorial example using one of the bundles.

Machine Learning Bundle Overview

- ML_Core

Provides the core data definitions and attributes for ML. It is a prerequisite for all of the other production bundles. See the HPCC Systems ML_Core repository on GitHub. - PBblas – Parallel Block Linear Algebra Subsystem.

Provides distributed, scalable matrix operations used by several of the other bundles. Can also be used directly whenever matrix operations are in order. This is a dependency for several of the other bundles. See the HPCC Systems PBblas repository on GitHub. - LinearRegression

Ordinary Least Squares Linear Regression for use as a ML algorithm or for other uses such as data analysis. See the HPCC Systems Linear Regression repository on GitHub. - LogisticRegression

Classification using Logistic Regression methods — both Binomial (two-classes) and Multinomial (multiple classes). In spite of the name, Logistic Regression is a Classification method, not a Regression method. See the HPCC Systems Logistic Regression repository on GitHub. - GLM

General Linear Model. Provides Regression and Classification algorithms for situations in which your data does not match the assumptions of LinearRegression or LogisticRegression. Handles a variety of data distribution assumptions. See the HPCC Systems GLM repository on GitHub. - SupportVectorMachines

SVM implementation for Classification and Regression using the popular LibSVM under the hood. See the HPCC Systems Support Vector Machines repository on GitHub. - LearningTrees

Random Forest based Classification and Regression. See the HPCC Systems Learning Trees repository on GitHub. - ecl-ml

This is the original HPCC Machine Learning bundle. It contains a wide range of Machine Learning algorithms at various levels of productization (i.e. documentation, reliability, and performance). Although it is no longer formally supported, it is still occasionally contributed to, and typically contains the latest and greatest experimental algorithms. It is useful when you need access to algorithms that are not yet supported by the production bundles. See the HPCC Systems ecl-ml repository on GitHub. Note that, due to the restrictions on bundle naming, this does not install properly as a bundle. You must download it into your development area to use.

The production bundles (excluding the supporting bundles ML_Core and PBblas) provide a very similar core interface for Machine Learning. That said, each algorithm has its own quirks (assumptions and restrictions) that must be taken into account. It is important to read the documentation that comes with each bundle in order to use that bundle effectively.

I have chosen LearningTrees (i.e. Random Forests) as the example bundle to use in this tutorial for a number of reasons:

- It is widely considered to be one of the easiest algorithms to use. Why?

- It makes very little assumption about the data’s distribution or its relationships

- It can handle large numbers of records and large numbers of fields

- It can handle non-linear and discontinuous relations

- It almost always works well using the default parameters and without any tuning

- It scales well on HPCC Systems clusters of almost any size

- It’s prediction accuracy is competitive with the best state-of-the-art algorithms

In the spirit of demystifying Machine Learning, let me try to explain the Random Forest algorithm in a few sentences.

- Random Forests are based on Decision Trees.

- In Decision Trees, you ask a hierarchical series of questions about the data (think a flowchart) until you have asked enough questions to split your data into small groups (leaves) that have a similar result value. Now you can ask the same set of questions of a new data point, and return the answer that is representative of the leaf into which is falls.

- Random Forest builds a number of separate and diverse decision trees (a decision forest) and average the results across the forest. The use of many diverse decision trees reduces overfitting since each tree is fit to a different subset of the data, and it will therefore incorporate different “noise” into its model. The aggregation across multiple trees (for the most part) cancels out the noise.

Tutorial Example

This tutorial example demonstrates the following:

- Installing ML

- Preparing Your Data

- Training the Model

- Assessing the Model

- Making Predictions

Installing ML

- Be sure HPCC Systems Clienttools is installed on your system.

-

Install HPCC Systems ML_Core

From your clienttools/bin directory run:ecl bundle install https://github.com/hpcc-systems/ML_Core.git

- Install the HPCC Systems LearningTrees bundle. Run:

ecl bundle install https://github.com/hpcc-systems/LearningTrees.git

Note that for PC users, ecl bundle install must be run as Admin. Right click on the command icon and select “Run as administrator” when you start your command window.

Easy! Now lets get your data ready to use.

Preparing Your Data

I assume that your data is reasonable clean (if not, you may need to read about data-scrubbing, which is outside of the scope of this article) and is stored in a standard record-oriented dataset such as:

myData := DATASET([...], MyRecordLayout);

I also assume that your data contains all numeric values, and that the first field of your record is an unsigned integer recordId of some sort. It is necessary that any textual labels (e.g. gender) have been converted to numeric values (e.g., “female” -> 1, “male” -> 2).

I’m further assuming that your data contains both the Independent Variables and the Dependent Variable (the variable you want learn to predict).

The first step is to segregate your data into a training set and a testing set. It is critical that you reserve some of your data for testing, as it is a very bad idea to test your model on the same data on which you trained it! Note that if your data is limited and you want to use all of it for training, there is a way to have your cake and eat it too using N-Fold Cross Validation. This is beyond the scope of this tutorial, but you can learn about it at ML_Core.CrossValidation.ecl.

When segregating your data, it is important to randomly sample from your dataset rather than using the first N records in the set, because there may be some hidden order in the process that generated the data. This is easily accomplished using ECL:

// Extended data format MyFormatExt := RECORD(MyFormat) UNSIGNED4 rnd; // A random number END; // Assign a random number to each record myDataE := PROJECT(myData, TRANSFORM(MyFormatExt, SELF.rnd := RANDOM, SELF := LEFT)); // Shuffle your data by sorting on the random field myDataES := SORT(myDataE, rnd); // Now cut the deck and you have random samples within each set // While you're at it, project back to your original format -- we dont need the rnd field anymore myTrainData := PROJECT(myDataES(1..5000), MyFormat); // Treat first 5000 as training data. Transform back to the original format. myTestData := PROJECT(myDataES(5001..7000), MyFormat); // Treat next 2000 as test data

As a general rule of thumb, about 20 – 30% of your data should be reserved for testing.

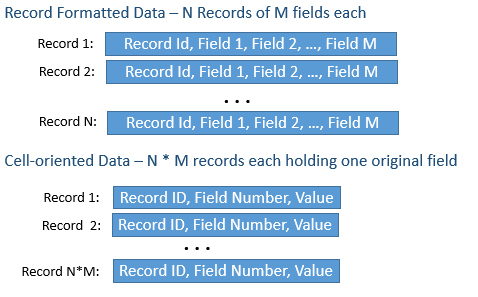

The next step is to convert your data to the form used by the ML bundles. In order to generically operate on any data, ML requires the data in a cell-oriented matrix layout known as the NumericField. The figure below illustrates the correspondence between your record-oriented data and the cell-oriented NumericField data.

ML_Core provides a macro, ToField, that makes that easy:

IMPORT ML_Core; ML_Core.ToField(myTrainData, myTrainDataNF);

At this point, myTrainDataNF will contain your converted training data. Note that the macro does not return the converted data but creates an new attribute in-line that contains it.

Do the same for your test data:

ML_Core.ToField(myTestData, myTestDataNF);

Now we want to separate the Independent Variables and the Dependent Variables for each set so that we can handle them separately.

Let’s assume that your data has 10 fields (excluding the record id) and that your dependent variable is in the 10th field:

myIndTrainDataNF := myTrainDataNF(number < 10); // Number is the field number myDepTrainDataNF := PROJECT(myTrainDataNF(number = 10), TRANSFORM(RECORDOF(LEFT), SELF.number := 1, SELF := LEFT));

Note that using the PROJECT to set the number field = 1 is not strictly necessary, but is good practice. This indicates that it is the first field of the dependent data. Since there is only one Dependent field, we number it accordingly.

Now we do the same for the training data:

myIndTestData = myTestDataNF(number < 10); myDepTestData := PROJECT(myTestDataNF(number = 10), TRANSFORM(RECORDOF(LEFT), SELF.number := 1, SELF := LEFT));

Our data is now ready to train the model and assess our results. We’ll do this first for Regression and then for Classification.

Fitting the Model and Assessing the Results

Regression

First we define our learner:

IMPORT LearningTrees AS LT; myLearnerR := LT.RegressionForest() // We use the default configuration parameters. That usually works fine.

Now we use that learner to train and retrieve the model:

myModelR := myLearnerR.GetModel(myIndTrainData, myDepTrainData);

Note that the model is a opaque data structure (interpretable only by the bundle) that encapsulates all the results of the training.

Now I can use that model to make predictions. That is, to predict the dependent variable of the test data given the independent variables.

predictedDeps := myLearnerR.Predict(myModelR, myIndTestData);

We want to test those predictions now against the myDepTestData, which we previously extracted from the segregated test records.

ML_Core has a way to do that using its Analysis module. It provides a generic assessment of the predictive power of the model. There are also a wide range of other assessment metrics provided by the individual bundles that are specific to the algorithms of that bundle. See the documentation for the individual bundles.

assessmentR := ML_Core.Analysis.Regression.Accuracy(predictedDeps, myDepTestData);

AssessmentR now contains a Regression_Accuracy record whose fields provide metrics on the regression’s accuracy. See ML_Core.Types.Regression_Accuracy for details.

In this case:

- R2 (R-Squared) — The “Coefficient of Determination”. Roughly, the proportion of the variance in the original data that was captured by the regression. R-Squared can range from slightly negative to 1. An R-Squared of 1 means that the predictions perfectly match the actual. R-Squared of zero or below means that the model has no predictive value. R-Squared of .5 means that half of the data’s variance was explained by the model.

- MSE (Mean Squared Error) — The average squared deviation between the predicted and actual values.

- RMSE (Root Mean Squared Error) — The square root of the MSE. This is an expectation of the average error on a given sample.

Got Regression? Now let’s do the same for Classification

Classification

Using Classification is very similar to using Regression with a few minor twists:

- Classification expects Dependent variables to be unsigned integers representing discrete class labels. It therefore expresses Dependent variables using the DiscreteField layout, rather than NumericField (see ML_Core.Types.NumericField).

- Classification exposes a “Classify” function rather that the “Predict” function provided by Regression

- Classification provides a different set of assessment metrics. It turns out that assessing a classification is more complicated than a regression. That is due to the discrete nature of the results.

Let’s go through the process here. We will assume, for this exercise, that the tenth field of your original dataset contained an integer “label”, representing the class associated with each record whose mapping we want to capture.

So we need to convert the NumericField records containing those class labels into DiscreteField records. ML_Core provides a convenient way to do this:

IMPORT ML_Core.Discretize;

myDepTrainDataDF := Discretize.ByRounding(myDepTrainData);

And likewise for the Test data while we’re at it:

myDepTestDataDF := Discretize.ByRounding(myDepTestData);

Let’s create our learner:

myLearnerC := LT.ClassificationForest();

Now we can train the model (just like regression)

myModelC := myLearnerC.GetModel(myIndTrainData, myDepTrainDataDF); // Notice second param uses the DiscreteField dataset

Now we predict the classes based on the test data

predictedClasses := myLearnerC.Classify(myModelC, myIndTestData);

And now we assess it:

assessmentC := ML_Core.Analysis.Classification.Accuracy(predictedClasses, myDepTestDatDF) // Both params are DF dataset

The returned record (assessmentC) contains a Classification_Accuracy record (See ML_Core.Types.Classification_Accuracy for details). That record contains the following metrics:

- RecCount – The number of records tested

- ErrCount – The number of mis-classifications

- Raw_Accuracy – The percentage of records that were correctly classified

- PoD (Power of Discrimination) – How much better was the classification than choosing one of the classes randomly?

- PoDE (Extended Power of Discrimination) – How much better was the classification than always choosing the most common class?

Say you got a raw accuracy of .6. You predicted the correct class 60% of the time. Pretty good? Well if there are two classes, then chance would make you right 50% of the time. So your only a little better than a random guess (PoD would be .2). Not quite as good as the raw accuracy seemed. Now suppose that seventy percent of the records were of class 1 and thirty percent were class 2. If we always predicted class 1, we would have been right 70% of the time, so our model predictions are significantly worse than always guessing 1! Our PoDE in this case would reflect that, with a reading of (-.333). An effective model should be positive on all three of these metrics. If you can’t do that, then the best prediction is the most common class.

There are a few other classification assessment tools in the Analyis module which I list here, but leave it to you to explore:

- Confusion Matrix – Which classes are most commonly confused?

- AccuracyByClass – Precision, Recall and False-Positive-Rate for each class. What percentage of the predictions of this class were actually of this class (i.e precision)? What percentage of the samples that were actually of this class were predicted to be of this class (i.e. recall)? What percentage of the samples that were not of this class were predicted to be of this class (i.e. false-positive-rate)?

Making Predictions

Making predictions using your model is now easy. You can expect that predictions on brand new datapoints will exhibit about the same accuracy as you got on your assessment using your segregated test data. This expectation, however, has an important assumption built-in: That your test data is representative of the population whose values you will try to predict. This is a subtler assumption than might be apparent at first glance. Suppose my training / test data was taken from a mature forest plot. Now suppose I use the trained model to predict values for a forest plot containing saplings. which I didn’t encounter in the original plot. How good do you think the model will do when presented with data from a young forest?

Or suppose I am trying to detect fraudulent transactions in a series of transactions, and in my training set, I labeled each transaction as fraudulent and not fraudulent. Seem good? Well, technically, the population that I’m trying to learn about is the set of good transactions plus the set of all possible fraudulent transactions. Since my training data only contains some types of fraudulent transactions and not all possible types, it is not truly representative of the population. So the best you can say, given your test results is that, with a certain accuracy you can predict fraudulent transactions that are similar to the ones in your training set. You could get a black-eye if you claimed that you could find 60% of fraudulent transactions.

That said, using the model to make predictions is technically easy. In fact we’ve already done it as part of assessing the model.

Assume we have a brand new set of samples (i.e. Independent Variables): myNewIndData

For regression:

predictedValues := myLearnerR.Predict(myModelR, myNewIndData);

or for classification

predictedClasses := myLearnerC.Classify(myModelC, myNewIndData);

Conclusion

You now know everything you need to know to build and test ML models against your data and to use those models to predict qualitative or quantitative values (i.e. classification or regression). If you want to use a different ML bundle, you’ll find that all the bundles operate in a very similar fashion, with minor variation. We’ve utilized only the most basic aspects of the ML bundles. Future blogs will delve into the ins and outs, one bundle at a time. Please note that not all of the HPCC Systems ML bundles are fully consistent with the interface described above. You will notice slight variations in the interface signatures, but the basic methods and flows are consistent. Future revisions of each bundle will bring them fully in line with the above example.

As always, I include my obligatory warning:

ML’s conceptual simplicity is somewhat misleading. Each algorithm has its own quirks, (assumptions and constraints) that need to be taken into account in order to maximize the predictive accuracy. Furthermore, quantifying the efficacy of the predictions require skills in data-science and statistics that most of us do not possess. For these reasons, it is very important that we do not use our exploration of ML to produce products or make claims on abilities without first consulting experts in the field.

You are now considered armed and dangerous. Have fun!

Find out more…

- Read Roger’s introductory blog which focuses on Demystifying Machine Learning.

- Find out about Using PBblas on HPCC Systems

- We have restructured the HPCC Systems Machine Learning Library. Find out more about this and future improvements.